Xcode 26.3 Goes Agentic: Apple Keeps Developers Where It Wants Them

Xcode 26.3 unlocks the power of agentic coding

Xcode 26.3 introduces support for agentic coding, a new way in Xcode for developers to build apps, powered by coding agents from Anthropic and OpenAI.

Apple Newsroom

Apple Newsroom:

Xcode 26.3 integrates Anthropic's Claude Agent and OpenAI's Codex, enabling developers to leverage AI agents directly within the IDE for autonomous task completion.

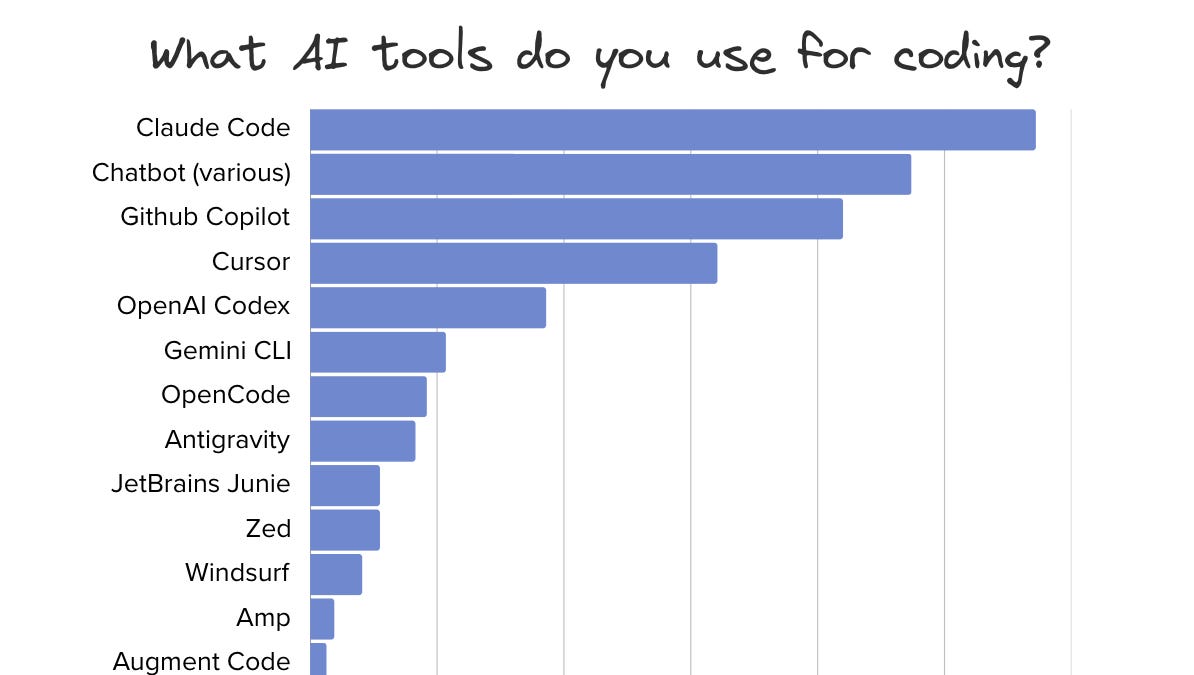

Over the past year, the biggest threat to Apple's developer ecosystem wasn't Flutter or React Native. It was Cursor. Developers were leaving Xcode not because they stopped building for Apple platforms, but because the AI tooling elsewhere was so much better that it was worth the tradeoff. Apple just closed that gap in a single release.

The real move here is MCP. By implementing a full Model Context Protocol server, Apple didn't just bolt on two AI providers. It made Xcode an open target for any MCP-compatible agent. Claude Code, Cursor, and whatever comes next can all drive Xcode natively now. That's not how Apple usually plays it. The company chose an open standard over a proprietary integration, which tells you how seriously it took the developer experience.
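To make "any MCP-compatible agent" concrete, here is a minimal sketch of the message shapes an MCP client sends. MCP messages are JSON-RPC 2.0, and `initialize`, `tools/list`, and `tools/call` are standard MCP methods. Everything Xcode-specific is an assumption: the tool name `build_project`, its `scheme` argument, the client name, and the protocol revision string are placeholders for illustration, not Apple's documented interface. The sketch only builds the messages and does not connect to anything.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC requests carry an id so responses can be matched


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request, the envelope MCP messages use."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# 1. Handshake: the client identifies itself and names a protocol revision.
#    The revision Xcode actually speaks is an assumption here.
initialize = jsonrpc_request("initialize", {
    "protocolVersion": "2025-06-18",
    "capabilities": {},
    "clientInfo": {"name": "example-agent", "version": "0.1.0"},
})

# 2. Discovery: ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# 3. Invocation: call one tool by name with arguments.
#    "build_project" and "scheme" are hypothetical, not real Xcode tool names.
call_tool = jsonrpc_request("tools/call", {
    "name": "build_project",
    "arguments": {"scheme": "MyApp"},
})

for message in (initialize, list_tools, call_tool):
    print(json.dumps(message))
```

The point of the open standard is step 2: an agent does not need to ship with knowledge of Xcode, because it can ask the server what tools exist and call them by name.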

Agents can autonomously search documentation, explore file structures, modify project settings, capture and iterate through Xcode Previews, execute builds, and implement fixes based on feedback.

This is the part that matters for day-to-day work. Xcode Previews have always been SwiftUI's killer feature but also its biggest friction point: constant rebuilds, layout tweaks, state wrangling. Having an agent that can capture a preview, evaluate it, and iterate without you touching the keyboard is genuinely new. It's the kind of integration you can't replicate in VS Code because VS Code doesn't have Xcode Previews.
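The capture, evaluate, iterate cycle described above can be sketched as a bounded loop. This is an illustration of the control flow only, not how Xcode or any particular agent implements it: `capture`, `evaluate`, and `revise` are hypothetical callables standing in for "render the preview", "judge the result", and "edit the code", and the padding example is a toy stand-in for a real layout check.

```python
from dataclasses import dataclass


@dataclass
class Review:
    acceptable: bool
    feedback: str = ""


def iterate_on_preview(capture, evaluate, revise, max_rounds=5):
    """Run capture -> evaluate -> revise until the review passes.

    Returns the round number that passed, or None if max_rounds ran out.
    All three callables are hypothetical placeholders.
    """
    for round_number in range(1, max_rounds + 1):
        snapshot = capture()           # e.g. an image or description of the preview
        review = evaluate(snapshot)    # does it match what was asked for?
        if review.acceptable:
            return round_number
        revise(review.feedback)        # change the code, then try again
    return None


# Toy usage: the "preview" is a dict, and the goal is padding of at least 16.
state = {"padding": 4}

rounds = iterate_on_preview(
    capture=lambda: dict(state),
    evaluate=lambda snap: Review(snap["padding"] >= 16, "increase padding"),
    revise=lambda feedback: state.update(padding=state["padding"] * 2),
)
print(rounds, state)
```

The `max_rounds` cap is the design choice worth noting: an agent that iterates without a human at the keyboard needs a stopping condition for the case where the evaluation never passes.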

Apple's playbook has always been the same: make the first-party experience good enough that leaving isn't worth it.